Looking at code an LLM generated for you, you often can't tell which of the small decisions about edge cases, error handling, and behaviour were deliberate and which the model simply guessed at, confidently or by accident. For a throwaway script that does its job and gets deleted, none of that matters much. But for code that needs to be maintained, or that I want a colleague to be able to read in six months and contribute to, those silent decisions become the actual problem.

Code someone typed by hand at least has fallbacks. You can ask the author, or read the git review thread, or lean on someone on the team who was there at the time. Code an agent generated has none of that. The chat is gone, the agent doesn't remember the project, and nobody on the team has any real opinion on why a particular loop is structured one way rather than another. So the spec ends up being the only channel that survives, and it has to carry more weight than the same spec would for code people wrote themselves.

As more code starts coming out of agents, the response from most teams has been to reach for ever better ways of nudging those agents toward producing the right thing, whether that's an AGENTS.md at the repo root, a .cursorrules file, a Claude skill, or a plugin with its own little instruction set. These describe how the agent should behave when it generates code, which is a different question from what the code should do. None of them is the file you'd open and edit when the requirements change. A spec is.

Plan, but not every detail

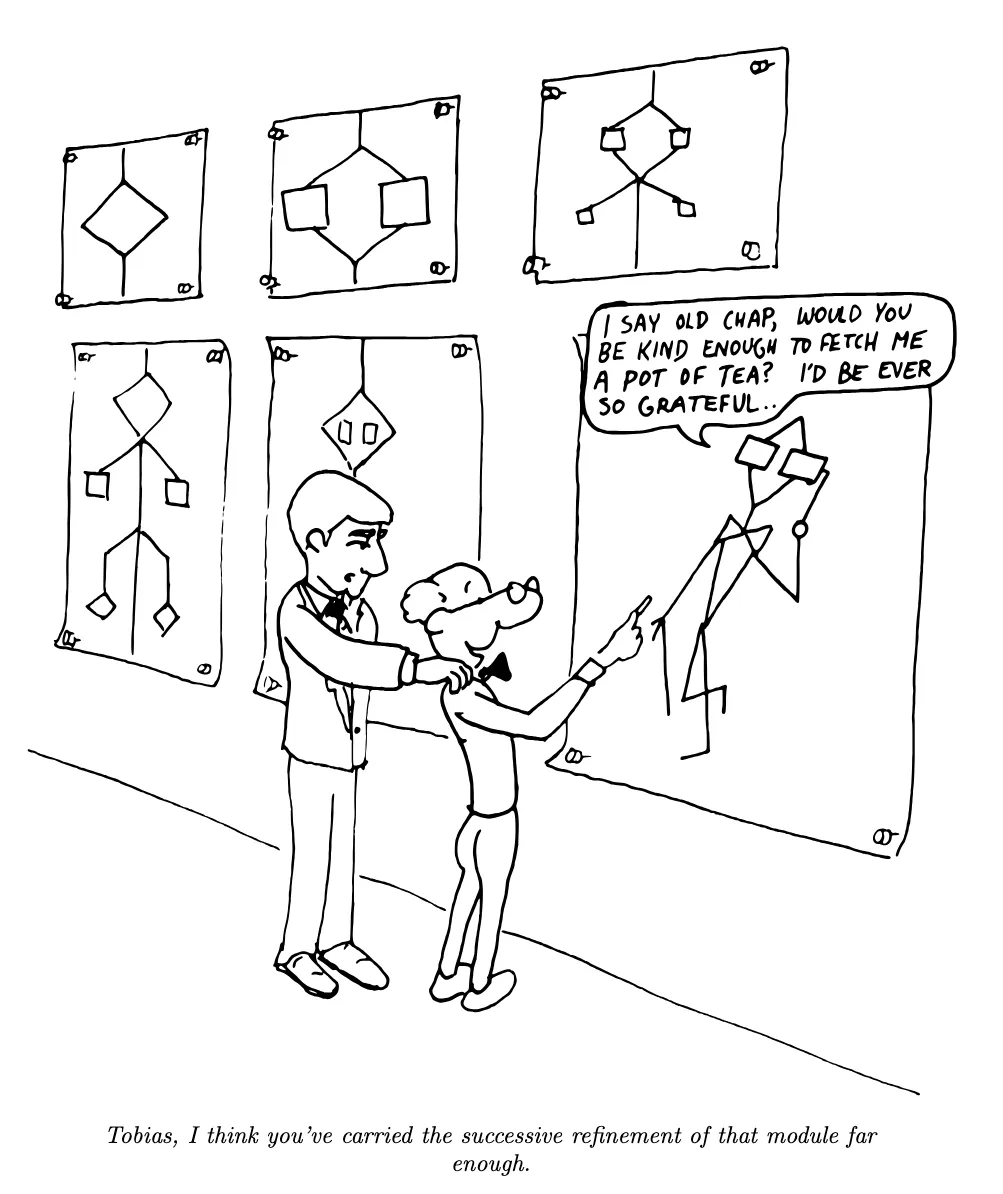

One of my favourite reads when I first picked it up was Leo Brodie's Thinking Forth, and the intro section "An Armchair History of Software Elegance" is something I've often recommended even to non-programmer friends who wanted to understand how programming evolved and why. Brodie names a tension that anyone who has worked under a "write no code until you have planned every last detail" rule will recognise. Elsewhere in the same book he warns that "an overindulgence in planning is both difficult and pointless," because human foresight is limited and the more you try to predict, the further you drift from what actually happens. The middle ground he points toward is iterative work where you write down enough to know what the program is for, without pretending to foresee every line before any code exists.

"Tobias, I think you've carried the successive refinement of that module far enough."

Cartoon from Leo Brodie, Thinking Forth, used under CC BY-NC-SA 2.0.

That tension is roughly the one specs face for AI-generated code. The spec has to be detailed enough that the agent doesn't have to invent the parts you actually care about, but it shouldn't be a thousand-line waterfall document trying to predict every branch. In Ossature, the audit step happens before any code is generated, so ambiguity and contradiction surface while the cost of fixing them is still an edit to the spec rather than a refactor of generated source.

Not every tool that uses the word "spec" works this way. Kiro and SpecKit walk you through a structured workflow at the start of a feature and hand the implementation off to an agent, and from there the spec mostly stops being touched and tends to drift out of sync within a handful of iterations. That can be a useful pattern for getting a single feature off the ground, but it's a different shape of workflow from one where the spec is the source of truth, the only thing the human edits, and the code is regenerated from it as needed.

When the spec is what you edit

Once you treat the spec as the thing you maintain instead of the code, the trade-offs change. Every change becomes an edit to the spec and a regeneration of whatever that edit affects, and with a build system that hashes inputs and only rebuilds what actually changed, this stops being expensive even on projects with multiple modules and dozens of tasks. Ossature handles this with per-task input hashes and interface files between specs that behave a lot like header files in C, so an internal change that doesn't alter the public interface doesn't cascade. After the first build, iterations end up feeling more like fixing a typo and rebuilding than regenerating from scratch.
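To make the hashing idea concrete, here is a minimal Python sketch. It is not Ossature's actual implementation: the function names, the sample specs, and the in-memory cache are all made up for illustration. The only assumption carried over from the description above is that a task's inputs are its own spec plus the public interfaces of the specs it depends on, not their internals.

```python
import hashlib


def digest(*parts: str) -> str:
    """Hash a sequence of strings into one stable hex digest."""
    h = hashlib.sha256()
    for part in parts:
        h.update(part.encode("utf-8"))
        h.update(b"\0")  # separator, so ("ab", "c") and ("a", "bc") differ
    return h.hexdigest()


def task_input_hash(spec_text: str, dep_interfaces: list[str]) -> str:
    """Inputs of a task: its own spec plus the interfaces of its dependencies.

    The dependencies' internal spec text is deliberately left out, which is
    what stops an internal change from cascading (the header-file analogy).
    """
    return digest(spec_text, *sorted(dep_interfaces))


def needs_rebuild(task: str, input_hash: str, cache: dict[str, str]) -> bool:
    """A task is rebuilt only when its input hash differs from the cached one."""
    return cache.get(task) != input_hash


# Hypothetical two-module project: "api" depends on "storage".
storage_interface = "def save(key: str, value: bytes) -> None"
storage_spec_v1 = "Store values on disk, one file per key."
storage_spec_v2 = "Store values on disk, one file per key, fsync after write."
api_spec = "Expose PUT /items/{key}, delegating to storage.save."

# First build: record the input hash of every task.
cache = {
    "storage": task_input_hash(storage_spec_v1, []),
    "api": task_input_hash(api_spec, [storage_interface]),
}

# Internal edit to the storage spec; its public interface is unchanged.
storage_changed = needs_rebuild(
    "storage", task_input_hash(storage_spec_v2, []), cache
)
api_changed = needs_rebuild(
    "api", task_input_hash(api_spec, [storage_interface]), cache
)
print(storage_changed, api_changed)  # True False

# Interface edit: the dependent task now has to be rebuilt as well.
new_interface = "def save(key: str, value: bytes, ttl: int) -> None"
api_after_interface_change = needs_rebuild(
    "api", task_input_hash(api_spec, [new_interface]), cache
)
print(api_after_interface_change)  # True
```

The design choice that matters here is what goes into the hash. Because the dependent task only sees the interface string, tightening how storage writes to disk rebuilds storage alone, while changing the signature of `save` invalidates everything that calls it.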

I think the case for spec-driven development (where specs are the source of truth) gets clearer the longer you live with the alternative, and it will keep getting more attention as long as AI-generated code continues to make its way into every aspect of the software development process. At this point you've probably seen many projects and codebases that were built entirely or largely by agents (GitHub is full of them), and they all tend to fail in similar ways. The code works, more or less, but nobody quite knows why. The reasons a certain approach was taken aren't written down clearly anywhere, and any non-trivial change becomes risky even for the maintainers themselves, because there's no record of what was deliberate in the first place. Specs are probably not the fix for everything, but they are somewhere to put the things that would otherwise live nowhere.